How to protect science against the coming AI-Slopcalypse?

AI slop is flooding scientific papers, figures, and peer reviews. Here's how ReviewerZero detects it across every surface of a manuscript.

You probably remember the biology paper that went viral in early 2024 for its obviously AI-generated rat figure. It became a symbol of a much deeper problem that was only beginning.

Over 1% of all scholarly articles published in 2023 (more than 60,000 papers) were substantially assisted by large language models. By 2024, that figure had risen sharply to 20% in computer science and 10% in other fields. A separate analysis of 14 million PubMed abstracts found a sudden spike in LLM-characteristic "style words" like delve, intricate, and commendable, with rates hitting 30% in some sub-corpora. That vocabulary shift was larger than the one caused by the COVID-19 pandemic.

Our defenses aren't keeping up.

The Scale of the Problem

What once took ghostwriters a long time to produce can now take minutes with LLMs. They can generate plausible introductions, literature reviews, and discussions, and look up appropriate references. The usage goes beyond manuscripts. Liang et al. found that up to 16.9% of peer reviews at major AI conferences were substantially LLM-modified, especially reviews submitted close to deadlines by reviewers reporting lower confidence. In late 2025, Pangram Labs analyzed all 75,800 peer reviews submitted to ICLR 2026 and found that 21% were fully AI-generated, with more than half showing some signs of AI use.

AI-generated figures are harder to catch. Even trained pathologists can't reliably distinguish AI-generated histological images from real tissue samples.

Why Single-Method Detection Falls Short

No single detection method covers the full attack surface. Text-only detectors miss AI-generated images entirely. Metadata-based approaches like C2PA content credentials only work when AI tools embed provenance data, and many don't. Pattern-based phrase detectors catch the obvious mistakes ("As an AI language model") but miss content that's been lightly edited. Statistical vocabulary analysis works at the corpus level but can't reliably flag individual documents.
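To make the limits of the two text-only approaches concrete, here is a minimal Python sketch of a phrase detector and a style-word rate. It is an illustration only, not ReviewerZero's implementation: the function names are hypothetical, the second phrase in the list is an assumed example, and the style words are the three mentioned above.

```python
import re

# Only the first phrase comes from the article; the second is an assumed example.
TELLTALE_PHRASES = [
    "as an ai language model",
    "regenerate response",  # assumption: hypothetical extra pattern
]

# The three "style words" named in the article (exact word match only).
STYLE_WORDS = {"delve", "intricate", "commendable"}


def find_telltale_phrases(text):
    """Return the telltale phrases that appear verbatim (case-insensitive)."""
    lowered = text.lower()
    return [phrase for phrase in TELLTALE_PHRASES if phrase in lowered]


def style_word_rate(text):
    """Return the fraction of words in `text` that are style words."""
    words = re.findall(r"[a-z]+", text.lower())
    if not words:
        return 0.0
    hits = sum(1 for word in words if word in STYLE_WORDS)
    return hits / len(words)
```

A lightly edited passage no longer contains the verbatim phrase, so `find_telltale_phrases` returns nothing for it. The style-word rate of a single short document is noisy, which is why that signal is more useful across a corpus than for one manuscript.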

At ReviewerZero, we build a multi-layered, defense-in-depth system that covers the full range of AI-generated content a manuscript can contain.

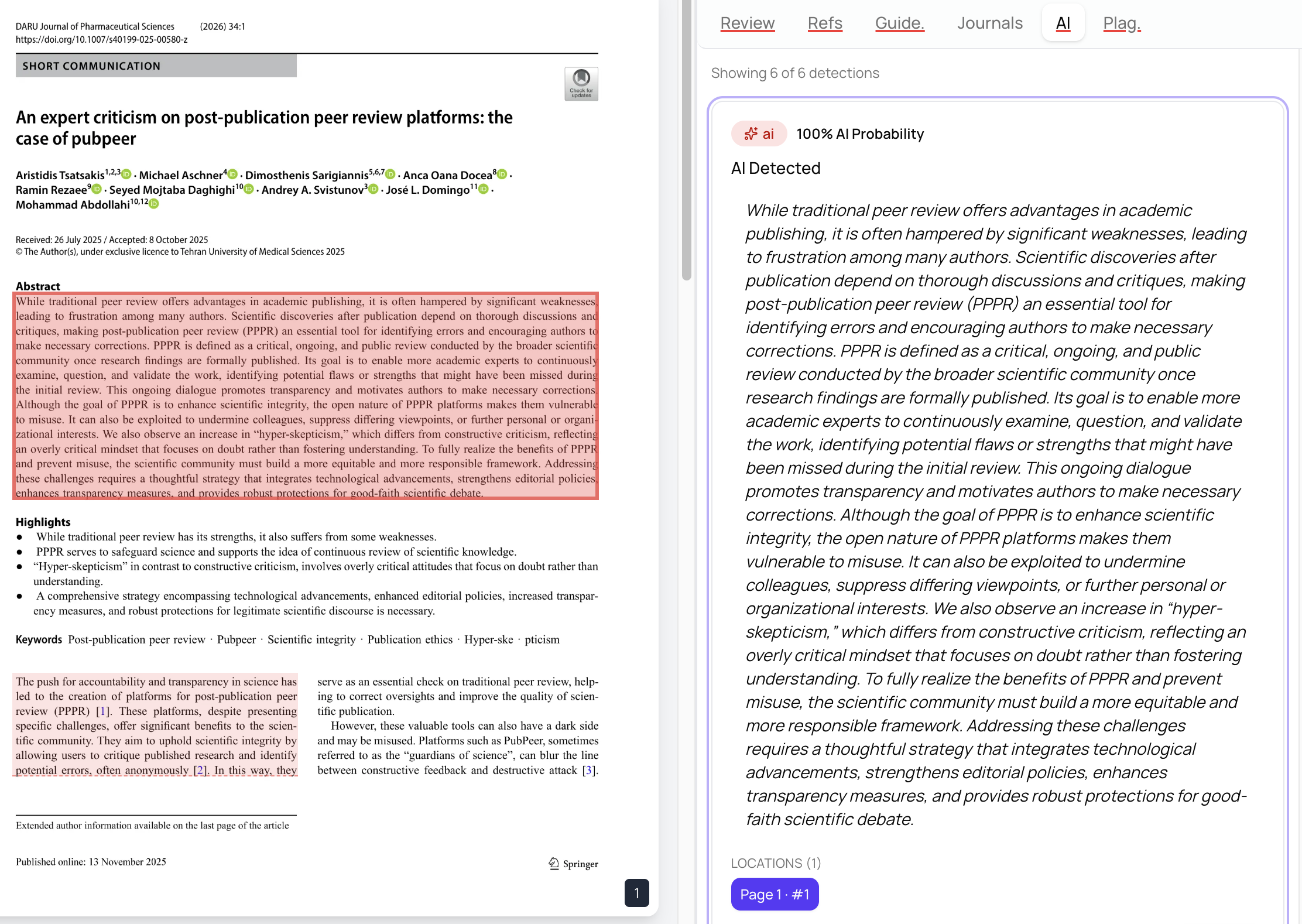

Paragraph-Level Text AI Detection

Using state-of-the-art AI-text detection models, we analyze the text of a manuscript and detect AI-generated content at the paragraph level.

A manuscript with one AI-generated methods section and an otherwise human-written discussion will show exactly where the concern lies. An editor can open the AI tab, see the exact passage that triggered the alert, check the model's confidence, and decide whether it reflects acceptable editing assistance or undisclosed machine-generated writing.

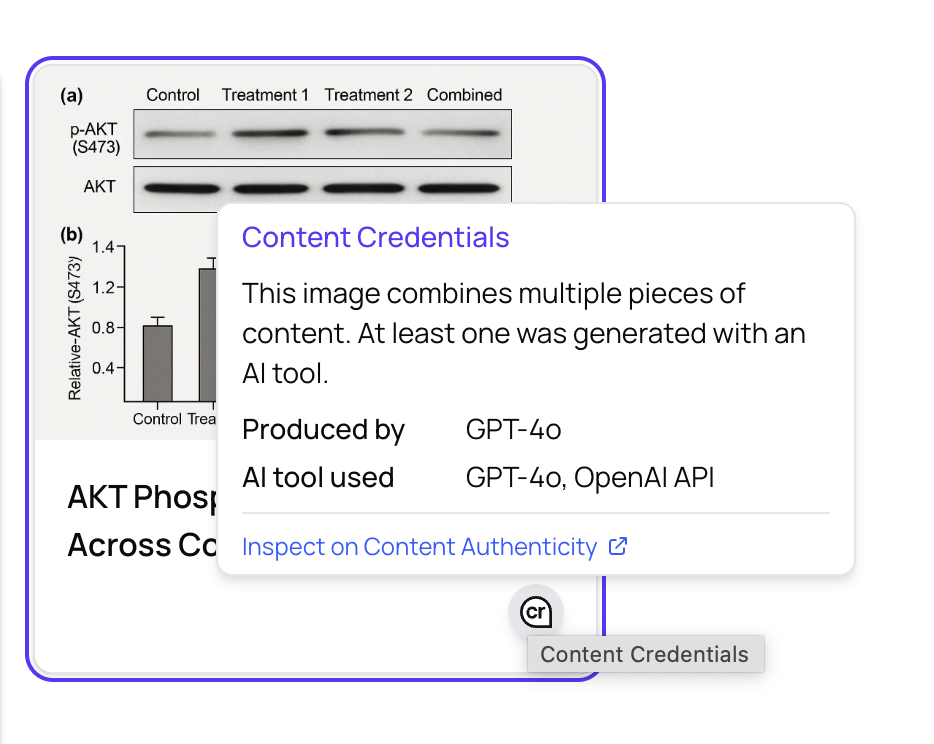

Content Credentials (C2PA) for Images

C2PA (Coalition for Content Provenance and Authenticity) is an open standard founded by Adobe, Microsoft, and others, now supported by Google, OpenAI, and a growing coalition of technology companies. It embeds cryptographic metadata into media files, recording how content was created and modified. When an AI tool like DALL-E or Midjourney generates an image and signs it with C2PA credentials, that provenance travels with the file.

We read and parse these C2PA manifests. A manifest is cryptographically signed, so it cannot be modified without breaking the signature. When an image carries a valid manifest declaring that it was created by an AI tool, that declaration is strong, tamper-evident proof of AI origin. Learn more about content credentials at contentcredentials.org.
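As a rough illustration of the manifest check, the Python sketch below inspects a manifest store that has already been parsed into a dictionary and whose signature has already been validated by C2PA tooling. The dictionary layout (`active_manifest`, `manifests`, `assertions`, `c2pa.actions`) is an assumption modeled on the JSON reports that C2PA tools emit, and the function name is hypothetical. The source-type URI is the IPTC term for media produced by a trained algorithm.

```python
# IPTC digital source type used to mark media created by a generative model.
AI_SOURCE_TYPE = (
    "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"
)


def declares_ai_generation(manifest_store, signature_valid):
    """Return True only if the signature validated AND the active manifest
    contains an action whose digitalSourceType marks AI generation.

    `manifest_store` is an already-parsed dict (assumed layout);
    `signature_valid` is the result of a separate validation step.
    """
    if not signature_valid:
        # An unverified manifest proves nothing about provenance.
        return False
    label = manifest_store.get("active_manifest")
    manifest = manifest_store.get("manifests", {}).get(label, {})
    for assertion in manifest.get("assertions", []):
        if not assertion.get("label", "").startswith("c2pa.actions"):
            continue
        for action in assertion.get("data", {}).get("actions", []):
            if action.get("digitalSourceType") == AI_SOURCE_TYPE:
                return True
    return False
```

Note that a `False` result only means no signed AI declaration was found. It says nothing about whether the image is authentic, which is why provenance checks need to be paired with other signals.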

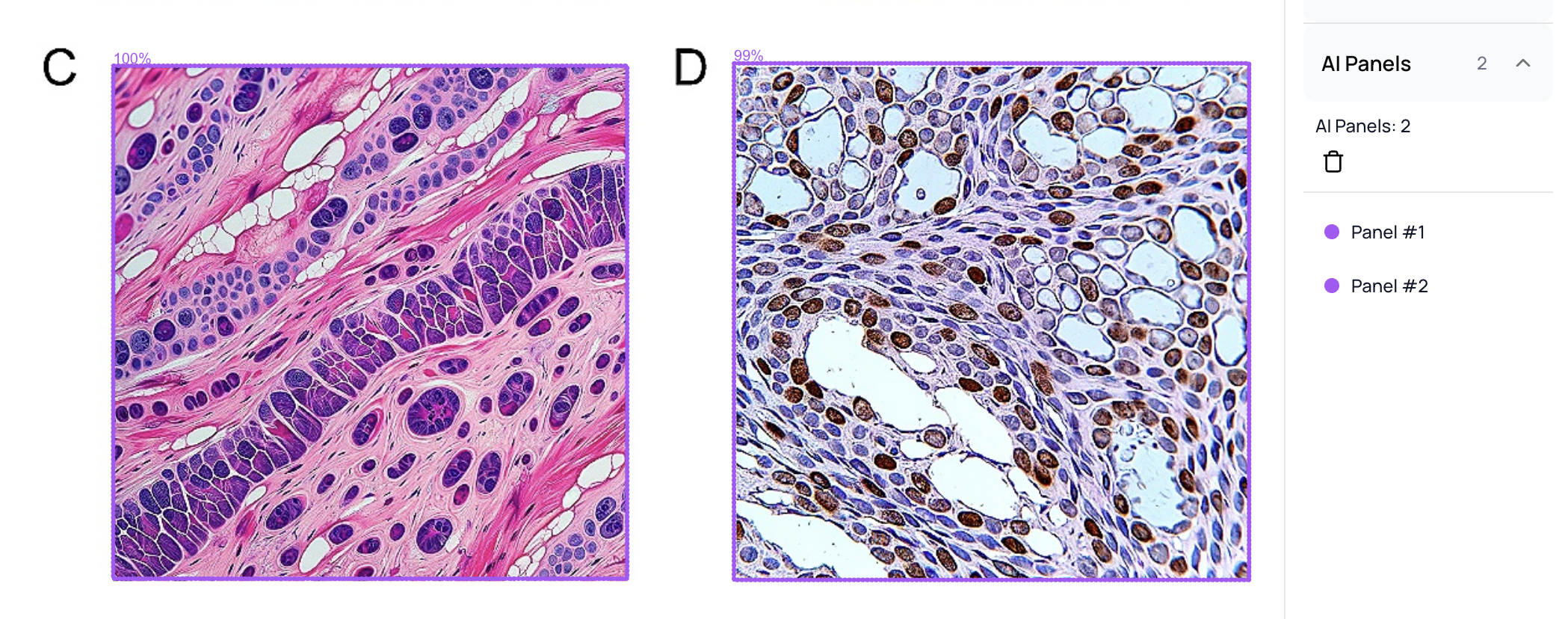

Computer Vision Image Detection

C2PA is valuable, but not all AI-generated images carry provenance metadata. Some generators strip it, some never embed it, and users can remove it by re-saving in a different format. For this reason, we have developed machine learning models that detect AI-generated images directly from pixel content.

These models operate both on individual panels within composite figures and on whole figures. Their predictions are not perfect, but combined with the other dimensions of analysis we perform, they form one more piece of the puzzle.

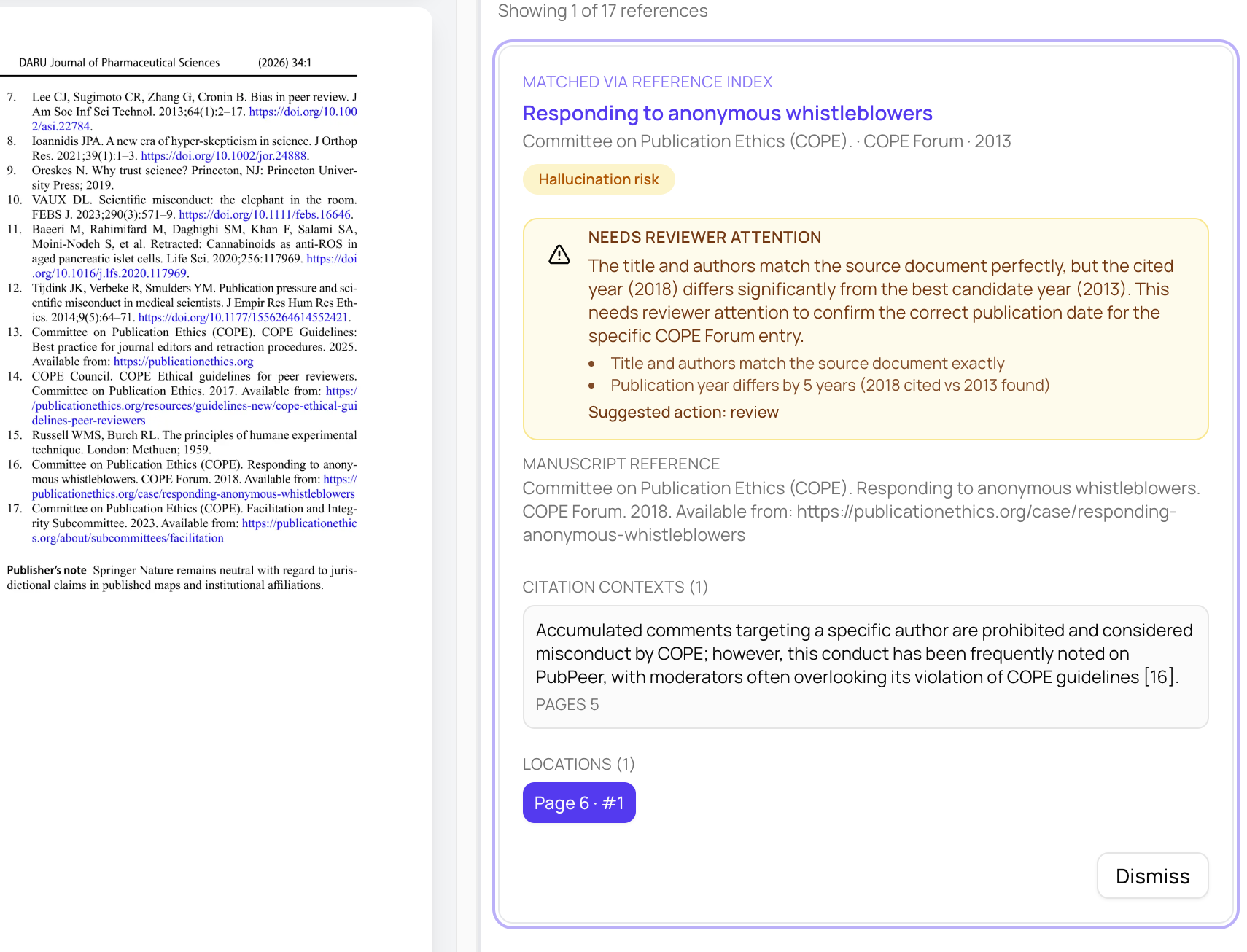

Citation Hallucination Protection

AI-assisted writing frequently introduces a subtler integrity failure: references that look real but contain fabricated years, wrong page numbers, mismatched titles, or entirely invented citations. These errors pass casual inspection while corrupting the scientific record.

We check references against known source records and highlight mismatches that deserve editorial review. Hundreds of rules help determine whether a discrepancy is a simple typo or a sign of hallucination. We also analyze where each reference is used in the manuscript (i.e., the citation context) and determine whether it is a good fit or potentially a citation inflation attempt.
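The basic idea of checking a cited reference against a known record can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the rule set described above: the field names (`title`, `year`, `pages`), the function name, and the 0.9 title threshold are all hypothetical, and the title comparison uses the standard library's `difflib.SequenceMatcher`.

```python
from difflib import SequenceMatcher


def compare_reference(cited, record, title_threshold=0.9):
    """Return the list of fields where the cited reference disagrees
    with the known source record.

    Both arguments are dicts with hypothetical keys: title, year, pages.
    """
    issues = []

    # Fuzzy title match tolerates small typos but flags mismatched titles.
    title_similarity = SequenceMatcher(
        None, cited["title"].lower(), record["title"].lower()
    ).ratio()
    if title_similarity < title_threshold:
        issues.append("title")

    # Exact comparison for fields that should match precisely.
    for field in ("year", "pages"):
        if cited.get(field) != record.get(field):
            issues.append(field)

    return issues
```

An empty list means the reference agrees with the record on the fields checked. A single mismatched field may be a typo, while several mismatches at once are a stronger hint that the reference deserves a closer look.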

Peer Review AI and Review Mill Detection

AI contamination doesn't stop at manuscripts. The trajectory from up to 16.9% AI-modified reviews at major AI conferences in 2024 to 21% fully AI-generated reviews at ICLR 2026 makes the trend clear. A review that was supposed to be a careful expert evaluation may instead have been generated by ChatGPT, or worse, produced by a review mill selling fabricated reviews to authors seeking favorable outcomes.

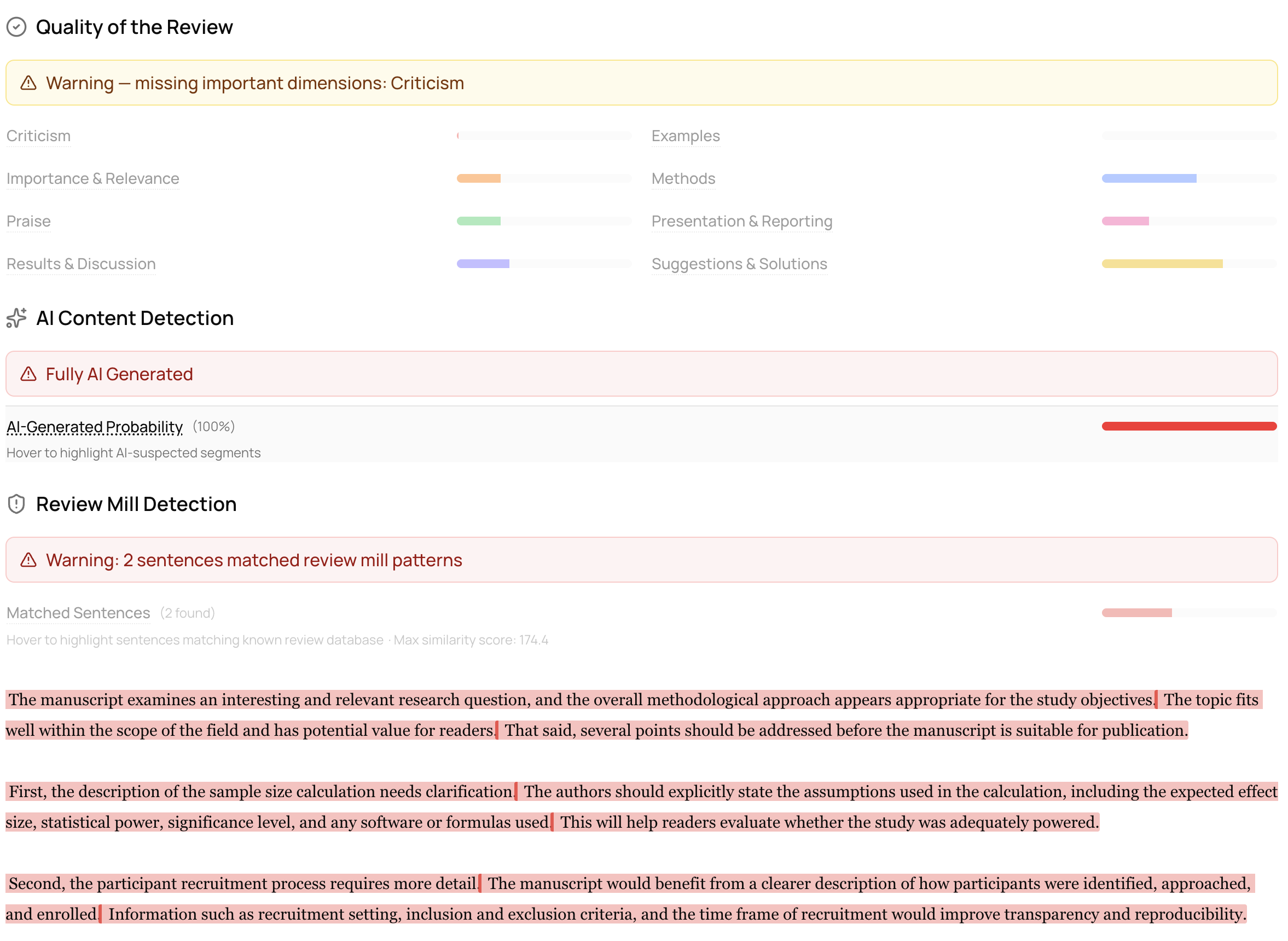

We address this directly. Editors can submit peer review text to the same ML-based detection pipeline we use for manuscripts. Each review is scored for AI probability, classified as Human, AI-generated, or Mixed, and broken down at the window level so editors can see exactly which passages appear machine-generated.
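One simple way to turn window-level scores into a three-way label is sketched below in Python. The aggregation rule, the 0.5 threshold, and the function name are assumptions made for illustration; the actual classification logic is not described here, and the per-window scores are assumed to come from some upstream detector.

```python
def classify_review(window_scores, threshold=0.5):
    """Map per-window AI probabilities to a label and the flagged windows.

    Assumed rule: no window at or above the threshold -> "Human",
    every window at or above it -> "AI-generated", otherwise "Mixed".
    """
    if not window_scores:
        raise ValueError("window_scores must not be empty")

    # Indices of the windows an editor would want to inspect.
    flagged = [i for i, score in enumerate(window_scores) if score >= threshold]

    if not flagged:
        label = "Human"
    elif len(flagged) == len(window_scores):
        label = "AI-generated"
    else:
        label = "Mixed"
    return label, flagged
```

Returning the flagged indices alongside the label keeps the result inspectable: the editor sees which parts of the review drove the classification, not just the verdict.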

We also run review mill detection that compares review sentences against databases of confirmed review mill content, flagging coordinated boilerplate language, suspicious citation manipulation, and formulaic praise. Each match returns a similarity score and source dataset, giving editors concrete evidence rather than vague suspicion.
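The sentence-matching step can be illustrated with a small Python sketch. It uses Jaccard similarity over word sets as a stand-in similarity measure; the measure, the 0.8 threshold, the function names, and the `(source_dataset, sentence)` corpus shape are all assumptions chosen for illustration.

```python
import re


def tokens(sentence):
    """Lowercased set of alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", sentence.lower()))


def jaccard(a, b):
    """Jaccard similarity between the word sets of two sentences."""
    tokens_a, tokens_b = tokens(a), tokens(b)
    if not tokens_a or not tokens_b:
        return 0.0
    return len(tokens_a & tokens_b) / len(tokens_a | tokens_b)


def match_review_mill(sentence, corpus, threshold=0.8):
    """Compare one review sentence against known review mill sentences.

    `corpus` is a list of (source_dataset, known_sentence) pairs.
    Returns matches at or above the threshold, best match first.
    """
    matches = []
    for source, known in corpus:
        score = jaccard(sentence, known)
        if score >= threshold:
            matches.append({"source": source, "score": score, "matched": known})
    return sorted(matches, key=lambda m: m["score"], reverse=True)
```

Each match carries its score and source dataset, mirroring the kind of evidence described above. Word-set overlap ignores word order and paraphrase, so a production system would likely need a more robust similarity measure.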

- Daniel Acuna, Founder & CEO, ReviewerZero

Get Started

Visit reviewerzero.ai to see the platform in action, explore all capabilities on our features page, or contact us at hi@reviewerzero.ai to discuss how we can support your editorial workflow.